Google ranking in action: what factors matter more?

- Inbound links and reputation

- Behavioral ranking factors

- Domain authority VS content quality

- Why can low-grade websites get into the Top 10?

Unlike the situation in SEO ten years ago, black-hat magic now hardly works. Paid links won’t bring the page to the Top and could even hurt rankings. AI algorithms are more likely to choose high-grade resources, and Google’s patents confirm this trend.

Googlebots tirelessly crawl websites and supply vast amounts of data to machine learning systems. By absorbing information, Artificial Intelligence improves itself, and AI-drawn conclusions are now barely different from those of human experts. Nevertheless, before the next core update is put into practice, the quality of the new search results must be judged by external raters. Based on their opinions, Google decides whether to launch new features and implement algorithm updates.

To keep up with Google Core Updates and track changes on the search results page, it’s enough to follow this page, where Google officially announces changes in the ranking algorithms.

Inbound links, website authority, and trustworthiness

The number and quality of a page’s inbound links, plus the domain’s backlink profile, are strong signals of the page’s importance to search engines. Link equity helps to compare pages of one site with each other and to make them stand out against competitors.

In Google, the PageRank algorithm is responsible for handling link equity. From 2000 to 2016, users could see the page value in the browser toolbar; then the PR meter was deprecated. Making it public was considered a mistake because such transparency led to a significant increase in garbage links.

However, the Google PageRank is still alive: the respective patent was re-registered in 2017 and will be valid until 2027. So, should we try to replicate the calculations? No, due to its low priority. Without reference to other factors, the PR is useless for making ranking forecasts.

Authoritativeness and Trustworthiness mostly refer to a domain and indicate its reliability, power of influence, and depth of connection with other relevant resources. There are several reputation-related metrics: Moz’s Domain Authority, Majestic’s Topical Trust Flow, etc., but we’ll skip them. They help analyze the SERP, yet surely Google has its own computational models for such concepts.

How can we think of link flow? It’s easy to imagine that link equity and website credibility can accumulate and are then redistributed by the link flow. The more reputable websites refer to you, the more trust you get from the search engines, and the higher position you take on the Results Page (SERP). Conversely, if a website has poor links or none at all, it could be difficult to prove its worth and relevance to the topic.
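To make the idea of accumulating and redistributing link equity more tangible, here is a minimal sketch of the classic, publicly described PageRank power iteration. It is a textbook simplification, not Google’s production model: the toy link graph, the domain names, the damping value of 0.85, and the iteration count are all assumptions chosen for illustration.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank via power iteration.

    `links` maps each page to the list of pages it links to.
    Returns a dict of page -> score; the scores sum to 1.
    """
    # Collect every page that appears as a source or a target.
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    pages = sorted(pages)
    n = len(pages)

    # Start with equity spread evenly across all pages.
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page gets a small baseline share regardless of links.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page passes its equity on, split among its outgoing links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page without outgoing links spreads its equity evenly.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical four-site web: nobody links to the orphan.
toy_web = {
    "authority.example": ["newbie.example", "hub.example"],
    "hub.example": ["authority.example"],
    "newbie.example": ["authority.example"],
    "orphan.example": ["authority.example"],
}
scores = pagerank(toy_web)
for page, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(f"{page}: {score:.3f}")
```

In this toy graph, the site that everyone links to collects the most equity, while the site with no inbound links keeps only the baseline share. That mirrors the point above: without references from others, there is little to redistribute.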

That means a newbie site’s homepage won’t immediately surpass the reputable competitors in Web Search. It’s hardly possible to build natural links quickly, and the algorithms won’t believe in artificial ones.

But it’s too early to talk about total defeat — there are many more ranking factors!

To start, site owners can optimize content for low-volume keywords. If Google considers the page relevant to the query and the user’s intent, the chances of it entering the Top 10 are super high.

So, suppose the page has just appeared at least in the Top 12 search results for a specific location. And then...

Behavioral ranking factors (user experience and response)

Then the behavior factor comes into effect. However inconsistent Googlers’ hints have been, practice suggests (and patents indirectly confirm) that user actions on the SERP and later on the page may affect rank modification.

An example: the actions of the visitor who has just returned from the website to the SERP might tell the search engine whether the site met expectations and whether the search results were satisfactory. But who measures satisfaction?

The RankBrain algorithm (see possible patent). Of course, it doesn’t read the minds of visitors but examines the context and analyzes user interactions with SERPs. Along with Panda, Penguin for spam filtering, and Pigeon for local search, RankBrain strengthened the complex Google algorithm Hummingbird. But a year after the rollout, in 2016, it began to handle the majority of Google searches and became a standalone entity.

No one can say for sure what exactly the ranking engine takes into account when comparing trillions of pages. Still, it’s probable that some activity-oriented metrics have been implemented and may somehow affect the page ranking. They’re:

- click data for the given snippet and others on the particular SERP (click-through rate, wait time, etc.);

- deviation of the user’s behavior on the specific SERP from a typical scheme stored in their profile (1st-click rapidity, favorite positions, etc.);

- correlation between the user’s activity and interest in the topic (from the search history);

- the time the user spends on the landing page and the percentage of returns to the SERP.

Examples from the Google patent US8661029B1:

“...user reactions to particular search results or search result lists may be gauged so that results on which users often click will receive a higher ranking...”

“...a user that almost always clicks on the highest-ranked result can have his good clicks assigned lower weights than a user who more often clicks results lower in the ranking first...”
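The second quote can be illustrated with a small sketch. The patent does not give a formula here, so the weighting function below, the user labels, and the sample click log are purely hypothetical; the only idea taken from the quote is that clicks from a user who habitually picks the first result count for less.

```python
def click_weight(top_click_share):
    """Hypothetical weight for one click.

    `top_click_share` is the fraction of a user's past clicks that went
    to the highest-ranked result (0.0 to 1.0). The more habitual the
    user, the less each click is worth. The 0.5 factor is arbitrary.
    """
    if not 0.0 <= top_click_share <= 1.0:
        raise ValueError("top_click_share must be between 0 and 1")
    return 1.0 - 0.5 * top_click_share


def weighted_clicks(clicks, user_top_share):
    """Sum weighted clicks per URL from (user, url) pairs."""
    totals = {}
    for user, url in clicks:
        weight = click_weight(user_top_share[user])
        totals[url] = totals.get(url, 0.0) + weight
    return totals


# Hypothetical profiles: one user always clicks position 1, the other rarely does.
user_top_share = {"habitual": 1.0, "selective": 0.2}

# Hypothetical click log: both URLs received two clicks.
clicks = [
    ("habitual", "a.example"),
    ("habitual", "a.example"),
    ("selective", "b.example"),
    ("selective", "b.example"),
]
totals = weighted_clicks(clicks, user_top_share)
print(totals)
```

Both pages get the same number of raw clicks, yet the page chosen by the selective user ends up with the higher weighted total.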

Studies like this one from SEMrush show a direct correlation between site rankings and pages per session, as well as an inverse one between rankings and bounce rate (% of one-page sessions). That’s to be expected, but even so, correlation alone does not establish a causal relationship.

Surely, there might be other rank-modifying factors. By the way, can Google (or Yandex) know about user actions outside the SERP? In theory, yes. Each of them can use its browser data and statistics from analytical scripts installed on most websites. Note: I do not say the page’s analytics affect the page’s rank. I can only assume that data may be used to distinguish user behavior patterns.

NB! The visitor interacts not only with the content of the site but with the interface first; if something slows the page down, anyone will be annoyed. Googlebot also doesn’t like to wait for the resources to load. That is why website performance and mobile friendliness are necessary to survive the web.

From the section, it’s easy to conclude that neither link equity nor link juice will help a page that is not interesting to the visitor. Meanwhile, useful webpages may get a slight head start and appear on the SERP in notable positions.

Domain authority VS content and code quality. What affects the page rank most?

The answer depends on query volume, niche competition, the user’s intent, location, and who knows what else. Naturally, everyone wants to see exact numbers, so I’ll roughly estimate them. But you can at least argue about the proportion.

For competitive high-volume search terms related to conversions, such as “buy a smartphone” or “website promotion”, the ratio is around the following:

- domain info and trustworthy characteristics provide 30% of success;

- incoming page links and relevance of the anchors to the query – 20%;

- content relevance, Schema.org markup, and physical proximity of the company to the user’s location – 20%;

- HTTPS protocol, site performance, and usability (especially on mobile devices) – 20%;

- user response – the remaining 10% (implicit response: interaction with the site and actions on SERP; explicit: brand mentions, reviews on the Internet, etc.).
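The rough proportions above can be read as weights in a blended score. The sketch below only illustrates that arithmetic: the factor names, the 0-to-1 scale for each factor, and the two example sites are assumptions made for illustration, and nothing here reflects a formula published by Google.

```python
# Weights taken from the estimate above; they must add up to 1.
WEIGHTS = {
    "domain_trust": 0.30,       # domain info and trust characteristics
    "page_links": 0.20,         # incoming page links and anchor relevance
    "content_relevance": 0.20,  # content, Schema.org markup, proximity
    "technical_quality": 0.20,  # HTTPS, performance, usability
    "user_response": 0.10,      # implicit and explicit user response
}


def blended_score(factors):
    """Combine per-factor scores (each 0.0 to 1.0) into one number."""
    missing = set(WEIGHTS) - set(factors)
    if missing:
        raise ValueError(f"missing factors: {sorted(missing)}")
    return sum(WEIGHTS[name] * factors[name] for name in WEIGHTS)


# Hypothetical sites competing for a high-volume commercial query.
established = {
    "domain_trust": 0.9,
    "page_links": 0.8,
    "content_relevance": 0.6,
    "technical_quality": 0.7,
    "user_response": 0.5,
}
newcomer = {
    "domain_trust": 0.2,
    "page_links": 0.1,
    "content_relevance": 0.9,
    "technical_quality": 0.9,
    "user_response": 0.6,
}

established_score = blended_score(established)
newcomer_score = blended_score(newcomer)
print(f"established: {established_score:.2f}, newcomer: {newcomer_score:.2f}")
```

With these made-up inputs, the newcomer’s better content and code do not compensate for a weak domain and link profile, which is the situation described for competitive queries.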

Why is only 20% devoted to the site code quality? Because websites selling those services or gadgets online are not too different in this indicator. In a more diverse business environment, the percentage would be higher.

Why should we not rely too much on user feedback? To get feedback, we first need to lead the user from the SERP, and that’s not easy due to competition. An audience coming from other sources is unlikely to cause a rank boost.

In contrast to high-volume searches, long-tail ones (“looking for an extraordinary thing in the right color”) allow us to reach the Top on the strength of comprehensive content and user satisfaction alone, unless the domain is new and its link profile is empty. But once the site grows out of the “sandbox”, where the search algorithms are slightly biased against it, it might be easier to compete for niche-specific or long-tail terms.

Another encouraging thing: it’s possible to bring e-shop product cards to the Top 10 without building links for the domain and despite the authority of competitors. But there are some necessary conditions.

- The product description shouldn’t be copied from the manufacturer’s website and should contain important information, such as photos or videos, feature comparisons, warranty conditions, etc.

- The product is on sale; its card shows the price and has a Product with Offer Schema.org markup.

- There are testimonials from people who have already bought and used this product.

- All product pages are linked to each other and can be accessed from the category page in 1-2 clicks (for everyone, including bots).

- The store has been operating online for a while, and from external reviews, it’s clear that the e-shop isn’t fictitious and really delivers goods.
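As an illustration of the Product with Offer markup mentioned in the list, the sketch below builds a JSON-LD snippet using Schema.org’s `Product`, `Offer`, and `AggregateRating` types. The product name, URLs, price, and rating figures are placeholders invented for the example; which properties a given search feature actually requires should be checked against the current documentation.

```python
import json

# Placeholder product data; replace with real values from the store.
product = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "Example Trail Backpack 30L",
    "description": "Original description written by the store, not copied "
                   "from the manufacturer.",
    "image": ["https://shop.example/images/backpack-front.jpg"],
    "offers": {
        "@type": "Offer",
        "url": "https://shop.example/backpacks/trail-30l",
        "price": "89.00",
        "priceCurrency": "USD",
        "availability": "https://schema.org/InStock",
    },
    "aggregateRating": {
        "@type": "AggregateRating",
        "ratingValue": "4.6",
        "reviewCount": "27",
    },
}

# Serialize the data and wrap it in the script tag used for JSON-LD.
json_ld = json.dumps(product, indent=2)
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

The printed tag would go into the HTML of the product card, alongside the visible price and reviews it describes.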

For some locations, it’s relatively easy, but it’ll be more difficult where Amazon, eBay, and other marketplaces are strong. To be honest, I have no experience promoting e-commerce sites in “Amazon Basin” countries. Thus, I can’t advise whether to run your own e-store or to open a branch on the marketplace and start optimizing it.

The hardest thing is to promote hub pages, such as product categories or the blog homepage. They can’t satisfy the user’s need; their mission is to direct users to the destination. Without an influx of incoming links and authoritative domain support, they’ll quickly lose equity and be unable to hold top positions.

About the high-quality content

Well, you believe in your skills and have decided to climb Olympus, relying on the perfection of your content. I add my full support to the idea! Let’s produce well-structured content with clear headlines and descriptive illustrations.

The quality of the content is crucial, but there are nuances. To prevent misunderstandings with Google about quality, you could always use the General Guidelines and this post about core updates, which contains an explanation of E-A-T concepts in the style of questions-to-myself.

In short, Expertise-Authoritativeness-Trustworthiness means that writing well is not enough; the author should become a subject expert in advance. That is especially true for Your-Money-or-Your-Life websites, which can affect the visitors’ health and financial stability.

With the August 2018 Medic Update, the expertise of medical content creators became a necessary ranking factor for Google. Articles on health and nutrition not backed by the professional authority of the author or reviewer (a doctor, therapist, or scientist) are no longer considered reliable. In other niches, poor-quality content may still be helpful for users; some exceptions to the E-A-T rule are mentioned here.

Anyway, the search engine doesn’t have the expertise to verify the accuracy of newly emerging information and thus needs confirmation from the outside. To avoid letting the user down, Google tries to check the authors’ background and confirm their experience.

But even reputable authors must adhere to content accuracy because the search engine will still refer to the knowledge base. If a lot of things don’t add up and the author has no explanation, the article will be considered to be of poor quality. Studies disproving prevailing opinions are quite another matter. A lot will depend on the publisher’s reputation and the community's interest in discussing the issue.

Now that the section is over, it’s worth referring to factors that are unlikely to be related to the quality score. I believe the optimal keyword density and percentage of unique text make no sense except intuitively. Artificially increased uniqueness won’t turn plagiarism into an original. The necessary non-unique phrases won’t worsen the rating of helpful tips. If you read, understand, and don’t consider the text spam, its keyword density is just optimal.

How can low-grade websites get into the Google Top 10?

If the engines are so wise, why do substandard resources sometimes appear among Google search results? And what are the rules for competing with intruders for leading positions?

The SERP for the particular query may be imperfect if the system cannot associate the new search term with an already known one or does not have enough unbiased data to analyze. Perhaps the topic is poorly represented on the Internet, or the industry as a whole has a desire to earn quick money and adheres to low standards.

Although individual sites may offer great content, there is no guarantee that they’ll take the leading positions.

Why? The ranking engine isn’t yet good at sensing what is good and what is bad on the topic. And so far, it operates according to some plan B that is vulnerable to manipulation.

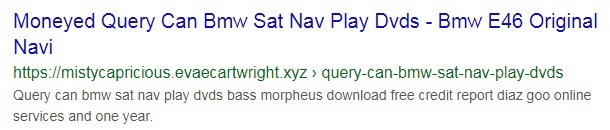

Here is a recipe for cheating the search engines and ranking well for low-volume, specialized search terms.

- Create a neat and modest webpage.

- According to the query, write human-readable headings.

- Stuff the sections with excerpts from relevant publications. It’s good if they contain related keywords.

- Let there be more text: automate the operation and fill several PC screens.

In sum, the text is pro forma relevant to the phrase, and the user scrolls, trying to figure out how the mess of familiar words can solve the problem.

A few years ago, you could safely make keyword-enriched text low-contrast or show it in small print at the bottom of the page. Since then, search algorithms have grown wiser and stopped ranking highly what people are unlikely to see.

One more good thing is that Google bots understand JavaScript and can anticipate the URL replacement. That’s why malicious redirects of users coming to the over-optimized page are now harder to implement but still possible. For example, they might pretend to be a voluntary user’s decision.

If the site seems suspicious, it’s better not to click anything but the browser back button.

But in fact, even pushy doorways still exist and aren’t going to disappear while their ranking prospects are melting away. Below is a snippet for one such website: the page redirects right away, not even trying to look decent.

Why do the tricksters bother with URL replacement; can’t they just prepare two versions of the page? The first could show bots some potentially well-ranking text; the second could meet organic visitors, say, at the casino table?

Yes, they can. That method is called cloaking; it violates white-hat guidelines and entails manual actions from Google. The risk of getting caught and being excluded from the index is huge. No doubt, the content substitution will one day become known, at least due to the opportunity to complain to Google. (But catching the cheater will be harder if cloaking is only used to stuff the content with keywords).
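A rough way to picture how content substitution could be noticed is to compare the visible text of the two versions. The sketch below is only a toy self-audit under stated assumptions: the two HTML strings are invented, the tag stripping is deliberately naive, and real detection systems are certainly more sophisticated.

```python
import re
from difflib import SequenceMatcher


def visible_words(html):
    """Naively strip tags and return the lowercase words that remain."""
    text = re.sub(r"<[^>]+>", " ", html)
    return text.lower().split()


def content_similarity(html_for_bot, html_for_user):
    """Return a 0.0 to 1.0 similarity ratio between two page versions."""
    matcher = SequenceMatcher(
        None, visible_words(html_for_bot), visible_words(html_for_user)
    )
    return matcher.ratio()


# Invented example: what a crawler is served versus what a visitor sees.
bot_version = (
    "<h1>Best hiking boots</h1>"
    "<p>Compare waterproof hiking boots by weight and price.</p>"
)
user_version = (
    "<h1>Welcome bonus</h1>"
    "<p>Spin the wheel at our online casino tonight.</p>"
)

honest_ratio = content_similarity(bot_version, bot_version)
cloaked_ratio = content_similarity(bot_version, user_version)
print(f"same page: {honest_ratio:.2f}, cloaked page: {cloaked_ratio:.2f}")
```

A ratio near 1 means both audiences see essentially the same text, while a low ratio is the kind of mismatch that a complaint or a manual review would bring to light.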

Update: What should we expect from the new BERT algorithm?

With the BERT algorithm’s implementation on October 21st, 2019, Google has begun to better understand phrases. This major update relies on the technique of bidirectional language processing, which helps AI dive deep into the context of a query and the intent behind it. When BERT is fully operational in all languages, we won’t need to arrange words, as we do when searching for (thing) (feature) buy (city). Besides, we can be more confident that the engine understands the meaning of prepositions and other supplementary parts of speech. UPD: As of December 9th, 2019, BERT already speaks as many as 70 languages!

Conclusions, quite optimistic

Anyhow, the main thing is that every single wrong blue link on the SERP harms the search engine’s reputation. Hence, Google is quite sincerely committed to providing quality search results. Over time, algorithms will be smart enough to separate the wheat from the chaff.

Until then, they can use a little help. Why don’t you offer them a sample of quality content for research? One day, your post will be appreciated and appear among the search results for a long-tail query. The chance to hook the user on SERP will be higher if you provide the page with a rich snippet.

And if the user notices you in the search results, lands on the page, and stays for a while, Google will sooner recognize that you are offering something worthwhile!